📢 We're hosting Project Guideline runs in Yokohama, Mie, and Tottori! Check out our regular running events page for more information.

Project Guideline: Towards helping everyone to run freely

Project Guideline is an early-stage research project exploring how Google AI can help people with visual impairments run freely by themselves.

“In Japan, around 60 percent of the population exercises routinely. However, that number drops to around 30 percent amongst people with visual impairments.”

Enjoying sport and having the opportunity to participate is a right granted to everyone by law. However, this right is not always a reality for those with various disabilities. For example, the simple freedom to run alone is an almost impossible dream for those with visual impairments.

“Is there a way to use technology to enable runners with visual impairments to run by themselves?”

This question was posed to us by Thomas Panek, President and CEO of Guiding Eyes for the Blind and an accomplished marathon runner in the United States, and it became our starting point. Everyone should be able to pursue their own physical potential freely and independently. We started this experiment as the first small step toward achieving such a society.

We believe in building products that work for everyone. Making use of now widely adopted smartphones and headphones, we built the first prototypes together with Thomas and announced Project Guideline in the United States in 2020.

As parasports and sports in general gained more attention in 2021, we announced the next stage of the project in Japan. Masamitsu Misono, a technology advocate for the blind community and an accomplished runner who is totally blind, was our first partner.

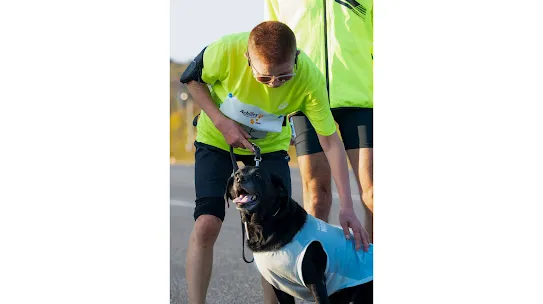

Towards a world where everyone can pursue their fullest potential

In 2022, we got together with NPO Achilles International Japan, an organization that connects people with disabilities and able-bodied people to enjoy running and walking together. We helped visually impaired runners participate, without any escort runners, in the ASICS World Ekiden 2022, a virtual Ekiden race in which teams of six pass on a digital sash. All six runners ran their segments with the help of Project Guideline and completed the 42.195 km in 4 hours 29 minutes 44 seconds. The team competed equally against other teams of able-bodied athletes from all over the world.

There are countless ways to enjoy running. You can run by yourself, run with a friend, or compete with like-minded runners. It shouldn’t matter if a person has a disability or not. Through a virtual race and with Project Guideline’s technology, we had the opportunity to demonstrate how a team of visually impaired runners could compete equally against teams of able-bodied athletes. The challenge highlights how Project Guideline has the potential to help everyone pursue their fullest potential.

In 2024, Project Guideline took a significant step forward with the support of the Yokohama Raport, a sports and cultural center for people with disabilities in Yokohama. We installed Project Guideline in the underground track at Yokohama Raport and now regularly hold programs where visually impaired runners can experience it. This allows more runners to experience the freedom of running independently and provides us with valuable feedback to further improve the technology. Originally launched in Yokohama, these running sessions have now expanded to Mie and Tottori Prefectures. For details, please see our announcement on regular running events.

How Project Guideline works

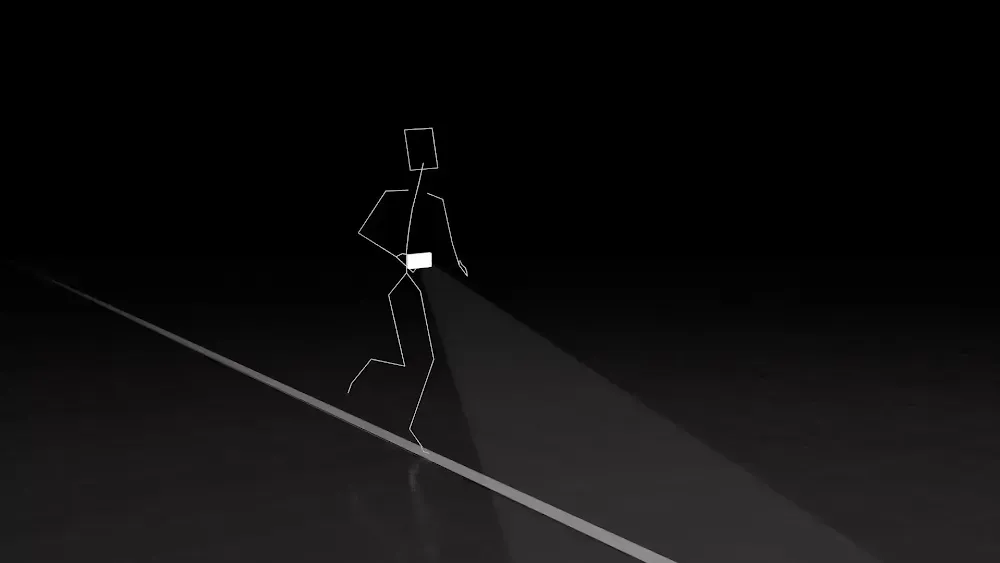

Project Guideline uses image recognition technology leveraging on-device machine learning that runs on Android smartphones. It identifies a colored line on the ground, determines whether the line is to the left, right or center of the runner, and sends audio signals through headphones worn by the runner. Based on that feedback, the runner can correct their running position to stay on the line and enjoy their run.
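The feedback loop described above can be illustrated with a minimal sketch. It is not Project Guideline's actual code: it assumes the model's output has already been reduced to a binary mask (1 where the line was detected), and the names `line_offset`, `classify`, `stereo_gains`, and the `dead_zone` threshold are hypothetical. The mapping from position to left/right volume is likewise only one possible audio design, chosen here for illustration.

```python
from typing import List, Optional, Tuple


def line_offset(mask: List[List[int]]) -> Optional[float]:
    """Horizontal offset of the detected line in [-1, 1], or None if no line.

    -1 means the line is at the far left of the frame, +1 at the far right.
    `mask` is a hypothetical binary segmentation output (rows of 0/1 values).
    """
    width = len(mask[0])
    total_x = 0
    count = 0
    for row in mask:
        for x, value in enumerate(row):
            if value:
                total_x += x
                count += 1
    if count == 0:
        return None  # the line is not visible in this frame
    if width < 2:
        return 0.0
    centre = (width - 1) / 2
    return (total_x / count - centre) / centre


def classify(offset: Optional[float], dead_zone: float = 0.15) -> str:
    """Label the line as left, right or center of the runner (assumed threshold)."""
    if offset is None:
        return "lost"
    if offset < -dead_zone:
        return "left"
    if offset > dead_zone:
        return "right"
    return "center"


def stereo_gains(offset: float) -> Tuple[float, float]:
    """Assumed audio mapping: the ear on the side of the line gets louder."""
    pan = max(-1.0, min(1.0, offset))
    return ((1 - pan) / 2, (1 + pan) / 2)  # (left gain, right gain)


if __name__ == "__main__":
    frame = [[0, 0, 0, 1, 1],
             [0, 0, 0, 1, 1]]
    offset = line_offset(frame)
    print(classify(offset), stereo_gains(offset))
```

In this sketch the dead zone keeps the signal at "center" while the runner is roughly on the line, so small deviations do not produce constant corrections.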

It’s easy for the human eye to identify a line on the road, but asking a machine to process that information is no easy task. The camera worn by the runner shakes constantly, and outdoors, the direction and brightness of the light change continually. Shadows and leaves can cover the course, and even the color of the ground itself is not constant. The system also has to handle any curves in the course.
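One generic way to cope with a shaking camera and frames where the line is briefly hidden is to smooth the per-frame estimate over time. The sketch below uses an exponential moving average; this is a common signal-processing technique offered purely as an illustration, not a description of how Project Guideline handles these conditions. The function name `smooth_offsets`, the `alpha` parameter, and the idea of a per-frame horizontal offset in [-1, 1] are all assumptions.

```python
from typing import Iterable, List, Optional


def smooth_offsets(offsets: Iterable[Optional[float]],
                   alpha: float = 0.3) -> List[Optional[float]]:
    """Exponentially smooth a stream of per-frame line offsets.

    `None` marks a frame where the line was not detected (for example,
    covered by a shadow or leaves); the last smoothed value is held.
    A smaller `alpha` smooths more but reacts more slowly to real curves.
    """
    smoothed: List[Optional[float]] = []
    state: Optional[float] = None
    for offset in offsets:
        if offset is not None:
            if state is None:
                state = offset  # first valid observation
            else:
                state = alpha * offset + (1 - alpha) * state
        smoothed.append(state)
    return smoothed


if __name__ == "__main__":
    # A jittery sequence with one dropped frame.
    print(smooth_offsets([0.0, 1.0, None, 1.0], alpha=0.5))
```

The trade-off is latency: heavier smoothing hides jitter but delays the response to a genuine change in the runner's position, so the value of `alpha` would need tuning against real runs.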

To make accurate decisions under these varying conditions, we developed an image recognition AI model that utilizes TensorFlow™, an open-source library for machine learning published by Google. We have improved the accuracy and performance of the system by gathering video data from as many situations as possible and training the model to recognize different environments. In addition, we have combined it with technologies such as Google's ARCore augmented reality (AR) platform to further improve spatial recognition capabilities, which should allow us to develop future features such as notifying runners of upcoming curves and detecting obstacles.

Contributing to the Community through Open Source

In 2023, we open-sourced Project Guideline as a way to contribute to the community of people working on technological innovation, primarily in the field of accessibility. The source code of the core technology we developed, as well as the pre-trained image recognition model and 3D simulator, are available for free to anyone. This allows developers and researchers around the world to use Project Guideline's technology for new accessibility initiatives or even apply it to technical development in completely new fields.

The Joy of an Expanding World, for Everyone.

Sighted or visually impaired, let's play together! The No-Look Sports Day.

Visually impaired children often have limited opportunities to participate in sports. To address this, we held a "No-Look Sports Day" in November 2024, creating a fun and accessible environment where they could experience the joy of movement and play alongside their families and friends.

In collaboration with the World Yuru Sports Association (Yuruspo), a non-profit organization that promotes new sports enjoyable for people of all ages, genders, and athletic abilities, we designed a range of innovative sports activities. These activities utilized Google's research projects, including Project Guideline, and accessibility features. The result was a truly inclusive sports event where everyone, sighted, with low vision, or blind, athletic or not, could participate and have fun using technology. Our hope is that through technology, we can contribute to a world where everyone can experience the joy of an expanding world.

Join our Regular Runs

We've partnered with organizations to offer programs where you can experience Project Guideline. Visit the individual program pages to learn more about each event.

Partnerships

Project Guideline is an ongoing project that continues to incorporate new technology and improve the user experience. We recognize the importance of involving members of the visually impaired community in the design and engineering processes, which is why we work with visually impaired runners to test Project Guideline and receive direct feedback on how to make the system safer and more user-friendly. To achieve this, we are collaborating with the following partners and conducting events and field tests for data collection and user feedback.

Similarly, we are seeking partners nationwide to introduce Project Guideline to facilities and implement programs for visually impaired runners. If you are interested in this program for your municipality or organization, please contact us via the Google Form.